Universal synthetic data using neural-network-based cellular automata

The models want to learn. The models want data. The models want exposure to complex, but not chaotic, data.

Why don’t we start with a random deep neural network that serves as the rule transforming the cells on a grid? That is, a neural cellular automaton. We can then train a transformer on the resulting vectors, each representing the state of the grid at one time step.
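A minimal sketch of the data-generation side, assuming a 3x3 toroidal neighborhood, continuous multi-channel cell states, and a two-layer tanh MLP as the random rule. These choices and the names `init_rule`, `step`, and `rollout` are illustrative assumptions, not the setup actually used. Each row of the returned array is one flattened grid state, i.e. one vector in the sequence a transformer could be trained on (for example, to predict the next state).

```python
import numpy as np


def init_rule(rng, channels=4, hidden=32):
    """Random weights for a hypothetical two-layer rule network (3x3 neighborhood -> new state)."""
    in_dim = 9 * channels
    return {
        "w1": rng.normal(0.0, 1.0 / np.sqrt(in_dim), (in_dim, hidden)),
        "b1": rng.normal(0.0, 0.1, hidden),
        "w2": rng.normal(0.0, 1.0 / np.sqrt(hidden), (hidden, channels)),
        "b2": rng.normal(0.0, 0.1, channels),
    }


def step(grid, rule):
    """One synchronous update of every cell from its 3x3 neighborhood (wrap-around edges)."""
    # Nine shifted copies of the (H, W, C) grid give each cell its full neighborhood.
    shifts = [np.roll(grid, (dy, dx), axis=(0, 1))
              for dy in (-1, 0, 1) for dx in (-1, 0, 1)]
    neigh = np.concatenate(shifts, axis=-1)            # (H, W, 9*C)
    h = np.tanh(neigh @ rule["w1"] + rule["b1"])       # same MLP applied at every cell
    return np.tanh(h @ rule["w2"] + rule["b2"])        # new state, each value in [-1, 1]


def rollout(rule, rng, size=16, channels=4, steps=32):
    """Return a (steps, size*size*channels) array: one flattened grid state per time step.

    `channels` must match the value used in init_rule.
    """
    grid = rng.uniform(-1.0, 1.0, (size, size, channels))
    states = []
    for _ in range(steps):
        grid = step(grid, rule)
        states.append(grid.reshape(-1))
    return np.stack(states)


rng = np.random.default_rng(0)
seq = rollout(init_rule(rng), rng)
print(seq.shape)  # (32, 1024): 32 time steps, each a 16*16*4 state vector
```

Because the same small network is applied at every cell, the rule is local and translation-equivariant, which is what makes it a cellular automaton rather than an arbitrary map on the whole grid.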

We then run a genetic evolution step on the random networks. As the fitness, we use a combination of two losses: the loss of a macro network, trained on all grids seen thus far, to represent novelty; and the loss of a new network, to represent learnability.
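One way the fitness could be computed, as a hedged sketch. To stay small and dependency-free, both the macro network and the new network are replaced here by ridge-regression predictors of each cell's next state from its 3x3 neighborhood (a swapped-in stand-in, not a transformer). Novelty is taken as the macro predictor's error on a candidate's grids, learnability loss as a freshly fitted predictor's error on the later half of that candidate's own transitions, and fitness is assumed to be `alpha * novelty - beta * learn_loss`. The source does not say how the two losses are combined; the weights, the truncation selection with Gaussian mutation, and every name below (`Macro`, `generation`, and so on) are hypothetical.

```python
import numpy as np

SIZE, CH, HID, STEPS = 8, 2, 16, 12    # hypothetical toy sizes
D_IN = 9 * CH                           # predictor features: a cell's 3x3 neighborhood
N_PARAMS = D_IN * HID + HID * CH        # rule net: two weight matrices, no biases


def neighborhoods(grid):
    """(H, W, C) -> (H, W, 9*C): each cell's 3x3 toroidal neighborhood."""
    return np.concatenate([np.roll(grid, (dy, dx), axis=(0, 1))
                           for dy in (-1, 0, 1) for dx in (-1, 0, 1)], axis=-1)


def rollout(params, rng):
    """Run the NCA encoded by a flat parameter vector; returns (STEPS+1, H, W, C)."""
    w1 = params[:D_IN * HID].reshape(D_IN, HID)
    w2 = params[D_IN * HID:].reshape(HID, CH)
    grid = rng.uniform(-1.0, 1.0, (SIZE, SIZE, CH))
    grids = [grid]
    for _ in range(STEPS):
        grid = np.tanh(np.tanh(neighborhoods(grid) @ w1) @ w2)
        grids.append(grid)
    return np.stack(grids)


def transitions(traj):
    """Per-cell (neighborhood, next state) pairs, ordered by time step."""
    xs = np.concatenate([neighborhoods(g).reshape(-1, D_IN) for g in traj[:-1]])
    return xs, traj[1:].reshape(-1, CH)


def ridge_fit(xtx, xty, ridge=1e-2):
    """Solve (X^T X + ridge*I) W = X^T Y for the linear predictor W."""
    return np.linalg.solve(xtx + ridge * np.eye(len(xtx)), xty)


def mse(w, xs, ys):
    return float(np.mean((xs @ w - ys) ** 2))


class Macro:
    """Stand-in macro network: ridge regression over every transition seen so far,
    stored as running sufficient statistics so memory stays constant."""

    def __init__(self):
        self.xtx = np.zeros((D_IN, D_IN))
        self.xty = np.zeros((D_IN, CH))

    def update(self, xs, ys):
        self.xtx += xs.T @ xs
        self.xty += xs.T @ ys

    def weights(self):
        return ridge_fit(self.xtx, self.xty)   # all zeros before any data is seen


def generation(pop, macro, rng, n_elite=4, sigma=0.1, alpha=1.0, beta=1.0):
    """Score every rule, keep the fittest, refill the population by Gaussian mutation."""
    w_macro = macro.weights()
    scores, data = [], []
    for params in pop:
        xs, ys = transitions(rollout(params, rng))
        half = len(xs) // 2
        # Learnability: held-out error of a fresh predictor fitted to this rule only.
        w_new = ridge_fit(xs[:half].T @ xs[:half], xs[:half].T @ ys[:half])
        learn_loss = mse(w_new, xs[half:], ys[half:])
        # Novelty: error of the macro predictor trained on all grids seen so far.
        novelty = mse(w_macro, xs, ys)
        scores.append(alpha * novelty - beta * learn_loss)
        data.append((xs, ys))
    for xs, ys in data:                        # the macro model now "sees" these grids too
        macro.update(xs, ys)
    order = np.argsort(scores)[::-1]           # highest fitness first
    elites = [pop[i] for i in order[:n_elite]]
    children = [elites[i % n_elite] + sigma * rng.standard_normal(N_PARAMS)
                for i in range(len(pop) - n_elite)]
    return elites + children, scores


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    pop = [rng.normal(0.0, 0.5, N_PARAMS) for _ in range(8)]
    macro = Macro()
    for gen in range(5):
        pop, scores = generation(pop, macro, rng)
        print(f"generation {gen}: best fitness {max(scores):.4f}")
```

The sign convention encodes the "complex, but not chaotic" goal: a rule scores well when the macro model, having seen everything so far, still predicts it poorly (novel) while a fresh model fitted to that rule alone generalizes to its later steps (learnable). Chaotic rules raise both losses, so they are not rewarded.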

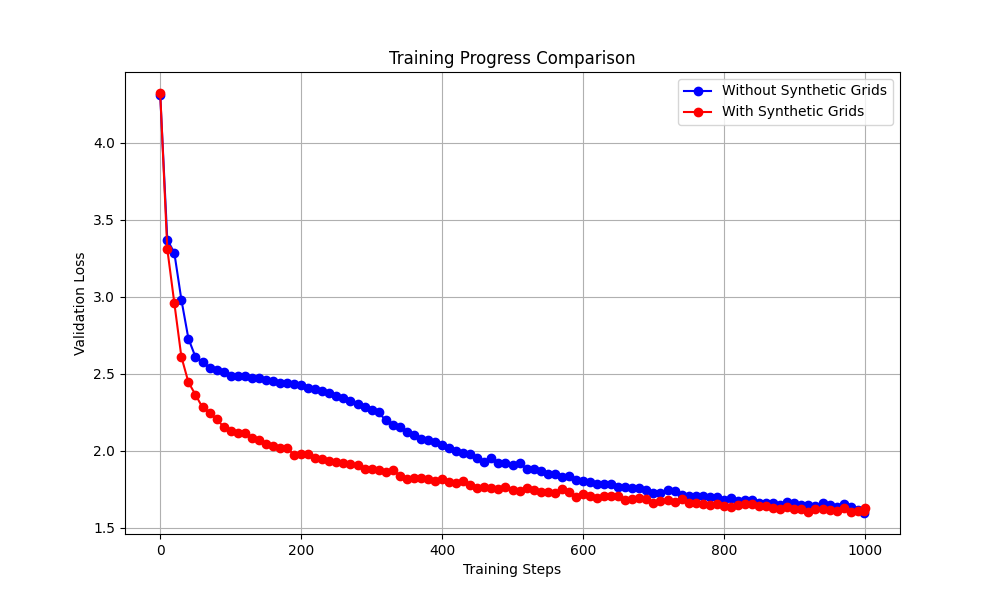

I tried a form of this, and it appeared to greatly accelerate learning, even on language data. Perhaps this was simply due to pretraining induction heads; I’m not sure.